At Universal Yums, our team manages a high-scale WooCommerce site (6+ million orders). I always enjoy hearing how other teams manage their development and deployment workflow, and thought I’d share what works well for us.

Version Control

We keep the entire site (excluding WordPress core files) in version control. It might seem odd to version control all third-party plugins, but this ensures everyone on the team runs the same code and allows us to roll back the entire site to a previous state if something breaks.

Here’s an example .gitignore file showing how to exclude core files.
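As a minimal sketch (not our exact file), a .gitignore along these lines keeps core out of the repository while leaving themes and plugins tracked. It assumes WordPress is installed in the repository root, and ignoring the uploads directory is an added assumption rather than something described above.

```gitignore
# WordPress core directories and root-level files
/wp-admin/
/wp-includes/
/index.php
/xmlrpc.php
/license.txt
/readme.html

# Root-level wp-*.php core files; this pattern also matches wp-config.php,
# which keeps environment-specific credentials out of the repository
/wp-*.php

# Assumption: user uploads are not tracked
/wp-content/uploads/
```

Everything else under wp-content, including third-party plugins, stays in version control.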

Project Management

We use GitHub to host our code and manage development.

Each feature or bug report is submitted as an Issue. We prioritize and assign Issues in the GitHub Project view. As Issues are worked on, they move through the statuses “Ready for Development”, “In Progress”, “In Review” and “Done”.

We do our best to scope all development work to tasks that can be completed in a week or less. This helps ensure frequent feedback and merges into the main codebase.

Limiting scope to a week is a forcing function to help us identify larger projects and break them down into smaller tasks. Many times we’ll release an MVP first (or keep it hidden from customers behind a feature flag) and then continue to iterate until it is feature complete. The development approach we took to rebuilding our theme is a good example.

Local Development

Our team is not prescriptive about the local development environment. My thinking is that people should use the tool that works best for them. Most members of the team develop on a Mac, but we have one on Windows.

For local development environments, half our team uses Laravel Valet and the other half uses Local WP. For IDEs, half the team uses VS Code and the other half uses PhpStorm.

Teams that manage a lot of sites in different server environments might find that a highly consistent Docker environment works better, but for our team managing a single site this works quite well.

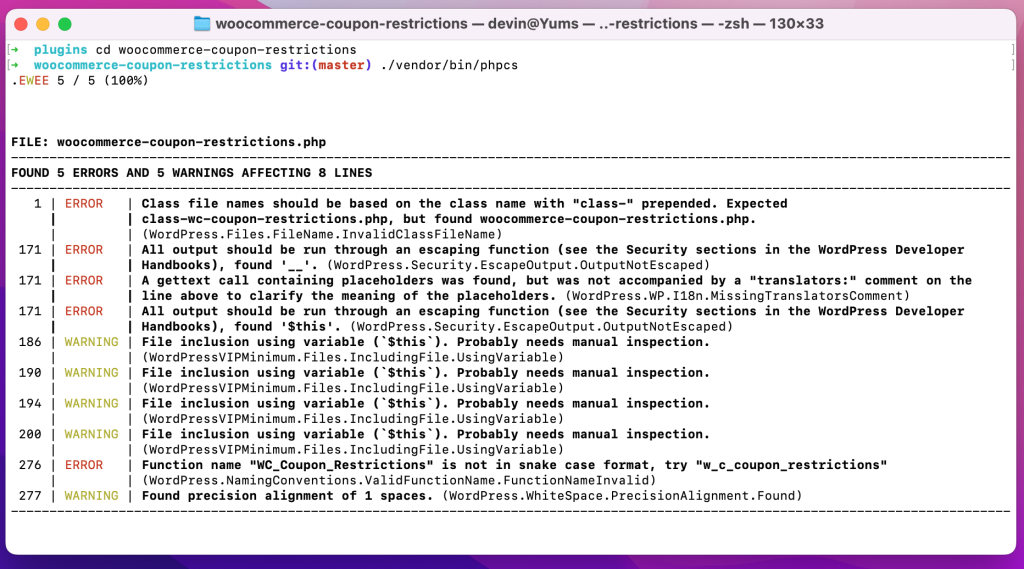

To ensure consistent coding standards, we have PHPCS configured and everyone has their IDE set to autofix on save.

Syncing Production to Local

In the past I’ve used custom bash scripts to export the database from production, import it locally, and update the URLs (which works great for smaller sites!).
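For a smaller site, that kind of script can be sketched with WP-CLI roughly as follows. This is an illustration rather than the script I actually used: the SSH target and both URLs are hypothetical arguments, and it assumes WP-CLI is installed locally and on the production server.

```shell
#!/usr/bin/env bash
# Sketch: copy a small production database into a local WordPress install.
set -euo pipefail

sync_db() {
  local remote="$1"     # hypothetical SSH target understood by WP-CLI's --ssh flag
  local prod_url="$2"   # hypothetical production URL
  local local_url="$3"  # hypothetical local URL
  local dump
  dump="$(mktemp)"

  # "db export -" writes the dump to stdout, so the file lands on this machine.
  wp --ssh="$remote" db export - > "$dump"

  # Load the dump into the local database.
  wp db import "$dump"

  # Rewrite URLs (serialized values are handled); GUIDs are left unchanged.
  wp search-replace "$prod_url" "$local_url" --skip-columns=guid

  rm -f "$dump"
}

# Run only when all three arguments are supplied.
if [[ $# -eq 3 ]]; then
  sync_db "$@"
fi
```

Run it from the root of the local install, passing the SSH target, the production URL and the local URL in that order.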

However, with our current database size over 100 GB, this isn’t feasible. Instead, we export the production database to a separate server instance where we remove customers (from the users table), orders (from the posts table) and order meta (from the postmeta table). This gives us a clean and lean database (less than 1 GB) that we use for local development.
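The cleanup step could look something like the sketch below. It is an approximation under several assumptions, not our production script: it assumes the default `wp_` table prefix, orders stored as `shop_order` and `shop_order_refund` posts, and customers identified by the `customer` role. Other order-related tables (for example `wp_woocommerce_order_items`) are left out.

```shell
#!/usr/bin/env bash
# Sketch: SQL for trimming customers, orders and order meta from a database copy.
set -euo pipefail

PREFIX="${PREFIX:-wp_}"   # assumption: default WordPress table prefix

trim_sql() {
  cat <<SQL
-- Order meta first, while the parent order rows still exist.
DELETE pm FROM ${PREFIX}postmeta pm
  JOIN ${PREFIX}posts p ON p.ID = pm.post_id
  WHERE p.post_type IN ('shop_order', 'shop_order_refund');
-- Orders and refunds stored as posts.
DELETE FROM ${PREFIX}posts
  WHERE post_type IN ('shop_order', 'shop_order_refund');
-- Users whose capabilities include the "customer" role.
DELETE u FROM ${PREFIX}users u
  JOIN ${PREFIX}usermeta um ON um.user_id = u.ID
  WHERE um.meta_key = '${PREFIX}capabilities'
    AND um.meta_value LIKE '%"customer"%';
SQL
}

# Print the SQL by default; run it only when explicitly asked to.
if [[ "${1:-}" == "--apply" ]]; then
  trim_sql | wp db query
else
  trim_sql
fi
```

Printing by default makes it easy to review the statements before running them with `--apply` against the copy, never against production.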

Although it would be ideal to have an exact replica to work with locally, this works fairly well for us. We always give code a secondary review in a staging environment with the full dataset before release to check for performance bottlenecks we may not have noticed locally.

Development Workflow

We continuously deploy code during the work week as pull requests (PRs) are approved. Most days we have anywhere between 2 and 12 releases.

New features or bug fixes are branched off main and worked on locally. When code is ready for testing and review, a new PR is opened. Automated tests are run on Travis CI whenever code is pushed to a PR.

We use WP Engine for hosting, so it’s fairly easy to refresh our hosted development and staging environments with the latest data. However, due to our database size, a refresh takes a few hours, so we rarely do it more than a couple of times per month. (My ideal setup would be to spin up a fresh environment for testing each PR individually, but I don’t know of a quick and efficient way to do this without investing a huge amount of time in infrastructure.)

We do our deployments using Buddy (which is a fantastic tool). It runs our build steps (composer, npm) and then deploys changes. We autodeploy updates merged to the dev or stage branch.
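The build steps amount to something like the following sketch. The exact flags and the `build` npm script name are assumptions for illustration; the real pipeline is configured in Buddy.

```shell
#!/usr/bin/env bash
# Sketch: build steps run before deploying.
set -euo pipefail

build() {
  # Install PHP dependencies without dev packages and with an optimized autoloader.
  composer install --no-dev --optimize-autoloader --no-interaction

  # Install the exact JS dependency versions recorded in the lockfile.
  npm ci

  # Assumption: package.json defines a "build" script that compiles assets.
  npm run build
}

# Run the steps only when invoked as: ./build.sh build
if [[ "${1:-}" == "build" ]]; then
  build
fi
```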

If the code works well in our development environment, the PR is assigned to another developer on the team for code review and a second round of testing in our staging environment. The branch protection rules in GitHub require passing tests and code review approval before code can be merged to main.

Deployments to production are manual (i.e. log into Buddy and press a button). If a bug does make it to production, Buddy allows us to easily roll back to an earlier changeset while we fix the issue.

Pushing from Stage to Production

I see this question a lot from people who are just starting to work with WooCommerce: “How can I push my changes from staging to production?”

Although it’s easy to push code changes, database changes are really tricky. Order data can flow in at any moment so it’s never safe to completely overwrite the posts or postmeta tables.

If a site is developed using a page builder and the post editor, the options are either to make the changes first in stage and then re-implement them in production, or to export and re-import the individual pages.

We use WP All Import to import new products to staging for testing. If everything looks good, we import to production.

When settings or options changes need to go out with code updates, we’ll generally script those out using a WP-CLI script and run the script as soon as deployment finishes (this also makes it easier to test a PR locally and in the different environments).
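Such a script can be very small. In the sketch below, the option names and values are hypothetical placeholders, not options from our site.

```shell
#!/usr/bin/env bash
# Sketch: apply settings changes that ship with a release.
set -euo pipefail

apply_settings() {
  # Hypothetical option names and values; replace with what the release needs.
  wp option update example_new_checkout_enabled yes
  wp option update example_shipping_notice "Orders ship within 3 business days"

  # Flush the object cache so the new values are picked up right away.
  wp cache flush
}

# Run only when invoked as: ./post-deploy.sh --run
if [[ "${1:-}" == "--run" ]]; then
  apply_settings
fi
```

Because the same script runs in every environment, the settings change gets tested locally and in staging along with the code before it reaches production.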

What Does Your Workflow Look Like?

This workflow works well for us as a team of five, but there would definitely be some challenges if we were to grow much bigger, especially in terms of having a shared development and staging environment. What does your workflow look like? Any major differences?